Intro: A Tale of 2 Servers, UDP Ports, and K3s

The Unintended Cluster Origin

It all started with an incident that was somewhat… ironically amusing.

I had a web project that needed to be deployed urgently. Instead of renting a VPS online as usual, I texted a senior friend in the industry to ask if he had a spare server I could use. He sent me the server details on the spot, enthusiastic, no hesitation.

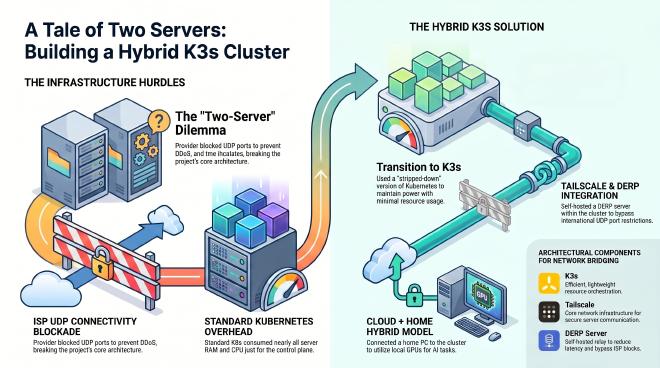

But life is not a dream. During the infrastructure setup, I discovered that this server had both inbound and outbound UDP connectivity completely blocked by the ISP. Unfortunately, my project’s architecture absolutely required this protocol. Anyone deep in infra knows the drill: some ISPs and hosting providers block or heavily filter UDP by default to keep DDoS amplification traffic off their pipes. Since this could not be fixed from the OS side, my friend had to open a ticket with the provider’s technical support to request that UDP be opened, and we were left waiting for their resolution.
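For readers who want to check for this kind of block themselves, here is a minimal sketch of a UDP probe. It assumes you control a second machine running a simple UDP echo service; the function name `udp_echo_ok`, the payload, and the timeout are hypothetical choices for illustration, not the exact test I ran that night.

```python
import socket


def udp_echo_ok(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a UDP datagram sent to (host, port) is echoed back in time.

    A False result means no matching reply arrived: the packet may have been
    dropped on the way out, on the way back, or nothing was listening.
    """
    payload = b"udp-probe"
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(payload, (host, port))
            data, _ = sock.recvfrom(1024)
        except OSError:
            # Covers timeouts, unreachable ports, and name resolution failures.
            return False
        return data == payload
```

Running the probe from the server toward an echo service abroad, and again toward one inside the country, would show the same split that the support team described later: domestic replies arrive, international ones never do. UDP is lossy by nature, so a single failed probe is only a hint; repeat it a few times before blaming the network.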

Faced with the project’s time pressure and unable to wait any longer, I bit the bullet and used my own money to buy a brand new server from another provider to keep up with the schedule.

The next morning, technical support reported that the ticket was resolved. However, they could only open UDP for domestic IP ranges; international traffic remained completely blocked. But what shocked me even more was realizing the truth: the server with the blocked port wasn’t an old spare he had lying around; it was a brand new server he had quietly paid for out of his own pocket so that I could use it. He wanted absolutely no extra fee and simply meant to help me get the project done.

The brotherly enthusiasm combined with the “bad luck” of hitting a network policy right out of the box created an ironic situation. Naturally, I couldn’t refuse such kindness, so I accepted it.

And just like that, from a guy sweating to find a place to host a web app, I suddenly had 2 servers in my hands.

Letting one server carry all the work while a beefier box sits idle is an unacceptable waste of resources. A plan immediately popped into my head: “Why not connect these two machines into a cluster, so they can share the load, resources, and provide redundancy for each other?”

That was the origin of this series. I turned to Kubernetes, the de facto standard for container orchestration. But right on the first day of deployment, it splashed cold water on my face: it was way too heavy! The control plane alone consumed almost all the RAM and CPU of the server, leaving very little room for the applications.

The solution appeared in the form of K3s, a lightweight Kubernetes distribution that has been stripped of its excess fat: it ships as a single binary and drops legacy features and in-tree cloud provider code, while fully retaining the core power of a distributed system.

This is my journey stepping from the world of Docker into the world of Kubernetes.

Actual Architecture

For those curious about the actual architecture I built to solve this bizarre network problem:

- International UDP was blocked, and even after the ticket was resolved, outbound UDP could only be routed domestically. As a result, the internal VPN connection kept dropping and was forced to fall back to TCP. Therefore, I decided to use Tailscale as the core network infrastructure, since it can relay traffic through DERP servers over HTTPS when a direct UDP path is not available.

- To bypass restrictions and reduce latency, I self-hosted a static DERP Server right inside the K3s cluster.

- After everything was connected, I hooked up my personal home PC to this cluster to handle AI tasks. Temporarily using my home GPU saved me a significant amount of money compared with renting extra cloud GPUs.
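To make the first two points more concrete, here is a minimal sketch of how one might check whether each Tailscale peer is reached directly or through a DERP relay. It reads the output of `tailscale status --json`; the field names `Peer`, `HostName`, `CurAddr`, and `Relay` are my understanding of that output and should be treated as assumptions, since the JSON format is not guaranteed to stay stable. The helper names `classify_peers` and `read_status` are hypothetical.

```python
import json
import subprocess


def classify_peers(status: dict) -> dict:
    """Map each peer hostname to 'direct', 'relay:<region>', or 'unknown'.

    Assumption: a non-empty CurAddr means a direct path is in use, and
    Relay holds the DERP region code used when there is no direct path.
    """
    result = {}
    for peer in (status.get("Peer") or {}).values():
        name = peer.get("HostName", "?")
        if peer.get("CurAddr"):
            result[name] = "direct"
        elif peer.get("Relay"):
            result[name] = f"relay:{peer['Relay']}"
        else:
            result[name] = "unknown"
    return result


def read_status() -> dict:
    """Run the Tailscale CLI and parse its JSON status (requires tailscale installed)."""
    out = subprocess.run(
        ["tailscale", "status", "--json"],
        check=True,
        capture_output=True,
        text=True,
    ).stdout
    return json.loads(out)
```

On a node in the tailnet, `print(classify_peers(read_status()))` gives a quick overview. With UDP blocked as in my case, I would expect the cross-border peers to show up as relayed rather than direct, which is exactly why the location and latency of the DERP server matter so much.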

That’s right, a Hybrid Cloud cluster combining a cloud VPS and home hardware. This is also the first time I’ve genuinely rolled up my sleeves to “mold” Kubernetes for a personal project. Therefore, this series is not just an architectural diary, but also a practical, from-scratch K3s guide for those who accidentally on purpose have 2 servers.