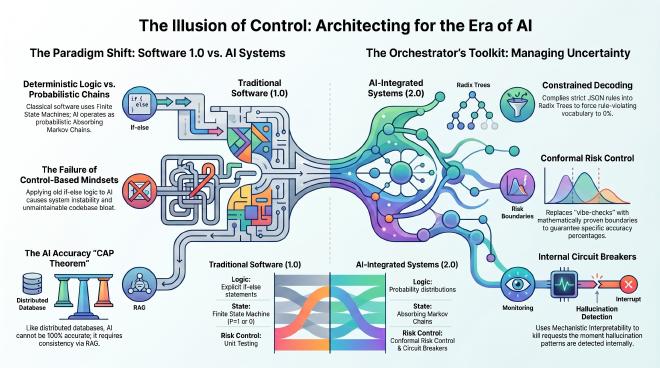

Paper 01: The Illusion of Control: System Design in the Era of AI


I. The Limits of Traditional Programming

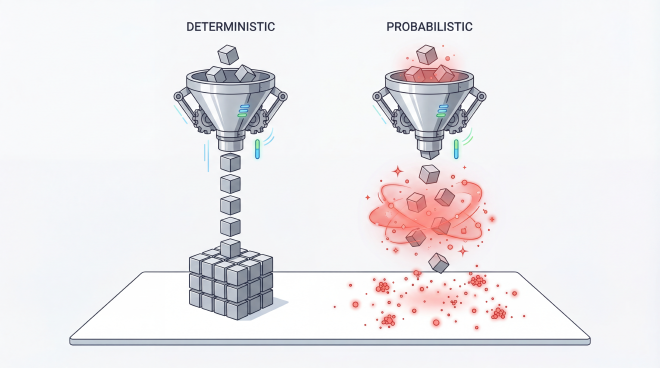

For decades, software engineering ran on a simple promise: write the logic, get the result. Same input, same output, every time.

Then we wired Large Language Models (LLMs) into core features — and the promise broke. The job stopped being about managing if-else statements and started being about orchestrating probability distributions. Keep the old control-first mindset, and the cracks turn into cascading failures the moment the system meets unfamiliar data.

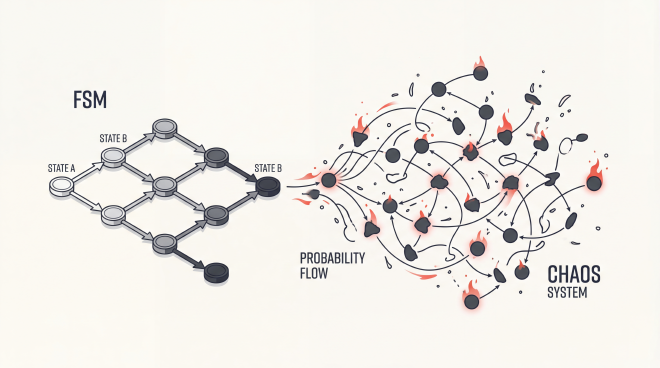

II. Fundamental Differences: FSMs vs. Absorbing Markov Chains

Underneath the surface, the shift is mathematical:

- Classical Software 1.0 operates as a Finite State Machine. Every transition from state A to state B is deterministic: it either always happens or never happens ($P=1$ or $P=0$).

- Modern LLM Systems function as Absorbing Markov Chains¹. Each next token is sampled from a probability distribution, and text generation halts only when the chain reaches a single absorbing state: the <EOS> (End of Sequence) token.

Push AI into a system without that absorbing state in mind, and you get Degenerate Loops²: the model stuck in a closed cycle, never reaching <EOS>. A runaway generation, in this framing, isn’t a content error. It’s a system that can’t terminate.
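To make the framing concrete, here is a minimal sketch that models a generator as a toy Markov chain over hypothetical states (`A`, `B`), with <EOS> as the only absorbing state. For every state, it checks whether <EOS> is reachable at all. A state that cannot reach it is trapped in a degenerate loop. When termination is guaranteed, the sketch computes the expected number of steps to absorption by fixed-point iteration on $t(s) = 1 + \sum_{s' \neq \text{EOS}} P(s, s')\, t(s')$. The state names, probabilities, and helper names are illustrative assumptions, not measurements of any real model.

```python
EOS = "<EOS>"
# Hypothetical toy chain: state -> {next_state: probability}
Chain = dict[str, dict[str, float]]


def can_terminate(chain: Chain) -> dict[str, bool]:
    """For each state, report whether <EOS> is reachable with nonzero probability."""
    # Reverse the transition graph, keeping only edges with p > 0.
    reverse: dict[str, set[str]] = {state: set() for state in chain}
    for src, successors in chain.items():
        for dst, p in successors.items():
            if p > 0:
                reverse.setdefault(dst, set()).add(src)
    # Walk backwards from <EOS>: every state reached has a path to termination.
    reached = {EOS}
    frontier = [EOS]
    while frontier:
        node = frontier.pop()
        for prev in reverse.get(node, set()):
            if prev not in reached:
                reached.add(prev)
                frontier.append(prev)
    return {state: state in reached for state in chain}


def expected_steps(chain: Chain, tol: float = 1e-12, max_iter: int = 100_000) -> dict[str, float]:
    """Expected number of steps until absorption in <EOS>, per starting state."""
    stuck = sorted(s for s, ok in can_terminate(chain).items() if not ok)
    if stuck:
        raise ValueError(f"degenerate loop: {stuck} can never reach {EOS}")
    # Fixed-point iteration on t(s) = 1 + sum_{s' != EOS} P(s, s') * t(s'), with t(EOS) = 0.
    t = {s: 0.0 for s in chain}
    for _ in range(max_iter):
        new_t = {
            s: 0.0 if s == EOS else 1.0 + sum(p * t[d] for d, p in chain[s].items() if d != EOS)
            for s in chain
        }
        delta = max(abs(new_t[s] - t[s]) for s in chain)
        t = new_t
        if delta < tol:
            break
    return t


# Healthy chain: every step has a 50% chance of emitting <EOS>.
healthy: Chain = {"A": {"B": 0.5, EOS: 0.5}, "B": {"A": 0.5, EOS: 0.5}, EOS: {EOS: 1.0}}
# Degenerate chain: A and B only feed each other, so <EOS> is unreachable.
degenerate: Chain = {"A": {"B": 1.0}, "B": {"A": 1.0}, EOS: {EOS: 1.0}}

print(expected_steps(healthy)["A"])
print(can_terminate(degenerate))
```

In the healthy chain, the expected generation length converges to 2 steps. In the degenerate chain, `A` and `B` form a closed cycle, so `expected_steps` refuses to return a finite answer rather than looping forever.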

And no, you can’t patch your way out with traditional code. Try to catch every edge case with yet another conditional or retry loop, and the codebase swells until it’s unmaintainable.

III. Structural Parallels with the CAP Theorem

Orchestrating LLMs feels strangely familiar to anyone who’s run microservices: both ask you to make decisions without ever having 100% accurate state data.

In distributed computing, the CAP Theorem sets hard limits on replicated data stores: during a network partition, a system must give up either consistency or availability. AI faces an analogous limit. No algorithm can be guaranteed to generate 100% accurate output, so accuracy has to be managed probabilistically:

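- RAG and Conformal Prediction: Just as a database locks tables to enforce consistency, an AI system uses documents retrieved via RAG (Retrieval-Augmented Generation) to anchor its answers to factual sources. Conformal prediction then wraps the output in a prediction set that contains the correct answer with a user-chosen probability.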

- Byzantine Fault Tolerance: In a multi-agent network, agents that hallucinate can be treated as Byzantine Nodes, which may return arbitrary wrong answers. Cross-agent consensus then accepts only an answer backed by a quorum of matching votes. Classical BFT protocols tolerate up to $f$ faulty nodes among $3f + 1$.
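Here is a minimal sketch of the quorum vote, assuming each agent's answer has already been normalized to a comparable string. Real free-text answers would need semantic matching instead. The function name `bft_consensus` is hypothetical. It applies the classical bounds: tolerating `f` Byzantine agents requires at least `3f + 1` agents, and an answer is accepted only with `2f + 1` matching votes, so at least `f + 1` of its supporters are non-faulty. One caveat: BFT assumes faults are independent, whereas LLM agents built on the same base model may hallucinate in the same way.

```python
from collections import Counter
from typing import Optional


def bft_consensus(answers: list[str], f: int) -> Optional[str]:
    """Return the answer with at least 2f + 1 matching votes, or None if no quorum forms.

    Assumes answers are pre-normalized strings and that agent faults are
    independent (an assumption correlated LLM agents may violate).
    """
    n = len(answers)
    if n < 3 * f + 1:
        raise ValueError(f"tolerating {f} faulty agents requires at least {3 * f + 1} agents, got {n}")
    answer, votes = Counter(answers).most_common(1)[0]
    # With 2f + 1 matching votes, at least f + 1 of them come from non-faulty agents.
    return answer if votes >= 2 * f + 1 else None


print(bft_consensus(["Paris", "Paris", "Paris", "Lyon"], f=1))
print(bft_consensus(["Paris", "Paris", "Lyon", "Nice"], f=1))
```

When no answer reaches the quorum, the sketch returns `None` instead of picking a plurality winner. That lets the caller escalate or retry rather than trust a weak majority.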

IV. Controlling Output with Constrained Decoding

One of the strongest architectural solutions available today wraps a strict state machine around the probability chain, caging the uncertainty before it can leak out.

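This mechanism is known as Constrained Decoding. Simply put, frameworks such as Outlines and SGLang compile strict data rules, like a JSON Schema or a regex, into a finite-state machine (or, for arbitrarily nested structures, a pushdown automaton) that is matched against the tokenizer’s vocabulary. At every step where the model generates the next token, the mechanism masks each rule-violating token by setting its logit to $-\infty$, which forces its probability to exactly $0$.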

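Pruning invalid tokens at every step means the sampler never commits to a sequence that breaks the rules, so the output is guaranteed to be syntactically valid JSON. It does not, however, guarantee that the values inside are correct. Because the allowed-token sets can be precomputed for each state, the runtime overhead is typically small.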
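The masking step can be illustrated with a minimal sketch. It assumes a hypothetical constraint (the output must be the JSON literal `true` or `false`), a hypothetical multi-character vocabulary, and a hypothetical `toy_logits` model that strongly prefers an off-schema token. Real frameworks precompile the constraint into an automaton with per-state token masks. This sketch checks prefixes on the fly instead, which is equivalent for a finite set of accepted strings.

```python
import math

EOS = "<EOS>"
# Hypothetical constraint: the output must be the JSON literal true or false.
ACCEPTED = {"true", "false"}
# Hypothetical tokenizer vocabulary with multi-character tokens, as in BPE vocabularies.
VOCAB = ["tr", "ue", "t", "rue", "fa", "lse", "hello", EOS]


def is_allowed(prefix: str, token: str) -> bool:
    """A token is legal if the text stays a prefix of some accepted string."""
    if token == EOS:
        return prefix in ACCEPTED  # <EOS> is legal only once the output is complete
    candidate = prefix + token
    return any(s.startswith(candidate) for s in ACCEPTED)


def masked_softmax(prefix: str, logits: dict[str, float]) -> dict[str, float]:
    """Set illegal tokens' logits to -inf, so their probability is exactly 0."""
    masked = {t: (logit if is_allowed(prefix, t) else -math.inf) for t, logit in logits.items()}
    peak = max(masked.values())
    if peak == -math.inf:
        raise RuntimeError(f"no legal continuation after {prefix!r}")
    exps = {t: math.exp(logit - peak) for t, logit in masked.items()}  # exp(-inf) == 0.0
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}


def toy_logits(prefix: str) -> dict[str, float]:
    """Hypothetical model that strongly prefers the off-schema token 'hello'."""
    scores = {t: 0.0 for t in VOCAB}
    scores.update({"hello": 5.0, "tr": 1.0, "fa": 0.5})
    return scores


def greedy_decode(max_steps: int = 10) -> str:
    """Greedy decoding in which every step goes through the constraint mask."""
    prefix = ""
    for _ in range(max_steps):
        probs = masked_softmax(prefix, toy_logits(prefix))
        token = max(probs, key=probs.get)
        if token == EOS:
            return prefix
        prefix += token
    raise RuntimeError("no <EOS> within max_steps")


print(masked_softmax("", toy_logits(""))["hello"])
print(greedy_decode())
```

The unconstrained model would pick `hello` first, but the mask drives its probability to zero, and decoding yields `true`. Note that the mask only enforces shape. Whether `true` is the right answer is still up to the model.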

V. Tackling Overconfidence with Conformal Risk Control

Another major challenge is the Calibration Paradox: training a model with RLHF to sound fluent and helpful can inadvertently teach it to state incorrect information with confidence.

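Instead of relying on aggregate diagnostics like Expected Calibration Error, which only measure miscalibration after the fact, engineers are adopting the Conformal Risk Control framework. It chooses a decision threshold on held-out calibration data so that the expected value of a chosen loss stays at or below a user-specified level $\alpha$. The guarantee holds in finite samples whenever calibration and test data are exchangeable. For example, it can keep the expected semantic error rate of a RAG feature at or below 5%.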
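Concretely, Conformal Risk Control assumes losses $L_i(\lambda) \le B$ that are nonincreasing in a tuning parameter $\lambda$, and picks

$$\hat{\lambda} = \inf\left\{\lambda : \frac{n}{n+1}\hat{R}_n(\lambda) + \frac{B}{n+1} \le \alpha\right\}, \qquad \hat{R}_n(\lambda) = \frac{1}{n}\sum_{i=1}^{n} L_i(\lambda),$$

which yields $\mathbb{E}[L_{n+1}(\hat{\lambda})] \le \alpha$ under exchangeability. The sketch below applies this rule to a hypothetical abstention policy. The system answers only when its confidence exceeds $\lambda$, and the loss counts a query as a "semantic error" only if it was answered and wrong. The policy, the function name `crc_threshold`, and the calibration data are illustrative assumptions.

```python
from typing import Sequence


def crc_threshold(confidences: Sequence[float], is_wrong: Sequence[bool],
                  alpha: float, B: float = 1.0) -> float:
    """Smallest confidence threshold lam whose calibrated risk bound is <= alpha.

    Hypothetical policy: answer a query only if confidence > lam, else abstain.
    Loss L_i(lam) = 1 if query i is answered AND wrong, else 0. This loss is
    bounded by B = 1 and nonincreasing (and right-continuous) in lam.
    """
    if len(confidences) != len(is_wrong):
        raise ValueError("confidences and is_wrong must have the same length")
    n = len(confidences)
    # The empirical risk only changes at observed confidences, so scan those in ascending order.
    for lam in sorted({0.0, 1.0, *confidences}):
        total_loss = sum(1.0 for c, wrong in zip(confidences, is_wrong) if wrong and c > lam)
        # CRC condition: n/(n+1) * mean_loss + B/(n+1) <= alpha
        if (total_loss + B) / (n + 1) <= alpha:
            return lam
    raise ValueError("alpha is unattainable: CRC needs n + 1 >= B / alpha calibration points")


# Hypothetical calibration set: 49 queries with synthetic confidences and correctness labels.
confs = [i / 50 for i in range(1, 50)]
wrong = [i <= 10 or i == 40 for i in range(1, 50)]
print(crc_threshold(confs, wrong, alpha=0.05))
```

With 49 calibration queries and $\alpha = 0.05$, the bound tolerates at most one answered-and-wrong query, so the threshold lands at 0.2. At deployment, the system would answer only above that confidence. Keep in mind that the guarantee holds in expectation over calibration and test draws, not for every individual batch.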


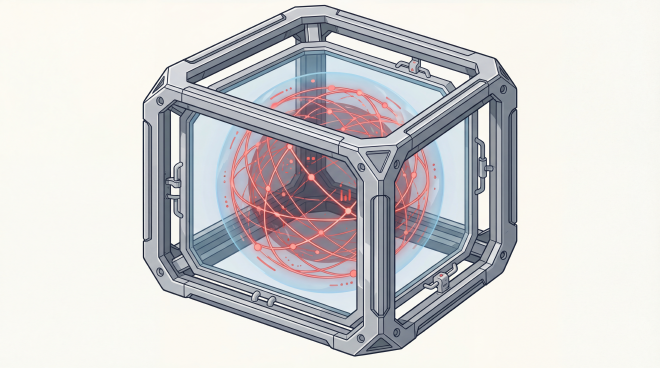

VI. Internal Network Risk Monitoring with Mechanistic Interpretability

Measuring risk after the fact isn’t enough. The next move is proactive: Mechanistic Interpretability. Train Sparse Autoencoders on the network’s internal activations, and you can decompose its “thought process” into sparse features, many of which are individually human-readable. This opens the black box from the inside.

This structure can act as an internal Circuit Breaker running in real time. In principle, the API tier can abort a text generation request the moment it detects an internal activation pattern associated with hallucination, stopping the flawed generation before the offending tokens are ever emitted. This only works if such a pattern can be identified reliably.
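Here is a minimal sketch of the circuit-breaker idea. It assumes a pre-trained SAE whose encoder has the ReLU form commonly used in SAE work, $f(x) = \mathrm{ReLU}(W_{enc}(x - b_{dec}) + b_{enc})$, and assumes one feature (index 1 here) has already been identified as correlating with hallucinated content. The weights, activations, feature index, threshold, and all class and function names are hypothetical. Real SAEs are trained on large activation datasets and have thousands of features, and whether any single feature reliably flags hallucination remains an open question.

```python
from typing import Iterable, Iterator

Vector = list[float]


class SAEEncoder:
    """Encoder half of a sparse autoencoder: f(x) = ReLU(W_enc (x - b_dec) + b_enc)."""

    def __init__(self, w_enc: list[Vector], b_enc: Vector, b_dec: Vector) -> None:
        self.w_enc, self.b_enc, self.b_dec = w_enc, b_enc, b_dec

    def features(self, x: Vector) -> Vector:
        centered = [xi - bi for xi, bi in zip(x, self.b_dec)]
        return [max(0.0, sum(w * c for w, c in zip(row, centered)) + b)
                for row, b in zip(self.w_enc, self.b_enc)]


class CircuitBreakerTripped(RuntimeError):
    """Raised when the watched feature fires during generation."""


def guarded_stream(steps: Iterable[tuple[str, Vector]], sae: SAEEncoder,
                   watched_feature: int, threshold: float) -> Iterator[str]:
    """Release each token only after checking the activation that produced it."""
    for token, activation in steps:
        strength = sae.features(activation)[watched_feature]
        if strength > threshold:
            raise CircuitBreakerTripped(
                f"feature {watched_feature} fired ({strength:.2f}) before emitting {token!r}")
        yield token


# Hypothetical toy SAE: 2-d activations, 2 features; feature 1 is assumed to track hallucination.
sae = SAEEncoder(w_enc=[[1.0, 0.0], [0.0, 1.0]], b_enc=[0.0, -0.5], b_dec=[0.0, 0.0])
steps = [("The", [0.3, 0.1]), ("capital", [0.2, 0.4]), ("is", [0.1, 0.9]), ("Atlantis", [0.0, 1.0])]
emitted = []
try:
    for tok in guarded_stream(steps, sae, watched_feature=1, threshold=0.45):
        emitted.append(tok)
except CircuitBreakerTripped as err:
    print("aborted:", err)
print(emitted)
```

The check runs before each token is released, so the breaker trips without ever emitting the flagged token. Where to place the check (per token, per chunk) trades latency against how early a failure is caught.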

VII. Architectural Summary

The static system designer of Software 1.0 is rapidly giving way to the engineer who orchestrates uncertainty:

- Constrained Decoding: Eliminate syntactically invalid outputs at the source with JSON Schema or regex constraints.

- Conformal Risk Control: Stop relying on blind vibe-checks. Adopt this framework for distribution-free guarantees on expected error rates.

- Internal Circuit Breakers: Use Sparse Autoencoders to catch systemic risks from inside the model’s activations before the end user ever notices.

VIII. References

- Karpathy, A. (2017). Software 2.0.

- Shannon, C. E. (1948). A Mathematical Theory of Communication.

- Outlines/SGLang (2025). Constrained Decoding Frameworks.

- Angelopoulos et al. (2024). Conformal Risk Control.

- Bricken et al. (2023). Towards Monosemanticity: Decomposing Language Models With Dictionary Learning.

¹ Absorbing Markov Chains: A state transition system in which, once an absorbing state (e.g., the <EOS> token) is reached, the system cannot exit and the loop must terminate.

² Degenerate Loops: A phenomenon in which an AI’s generation flow gets stuck in an infinite loop, repeating a token sequence endlessly and never reaching its <EOS> destination token.