Paper 02: The Law of Chaos: Decoding Entropy in Distributed Architecture

I. Prelude: The Paradigm Shift

The previous paper traced the move from pure logic to probability inside AI models. The catch: that boundary doesn’t live only inside the model. It runs through every node of the distributed systems we already operate.

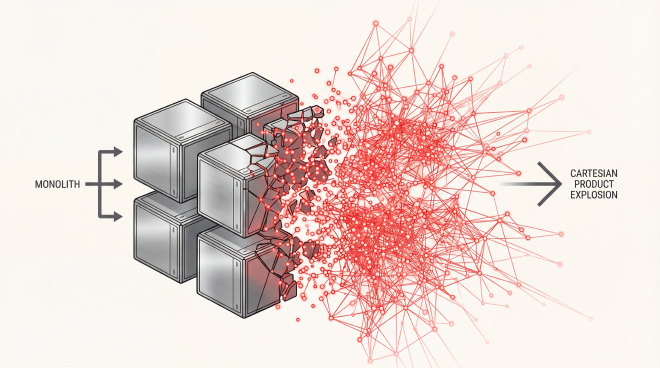

Here’s what’s easy to miss: breaking a Monolith into Microservices isn’t a code-splitting exercise. It’s a phase change. The system stops being Deterministic and starts being Probabilistic.

II. The Cartesian Trap: When Control Becomes an Illusion

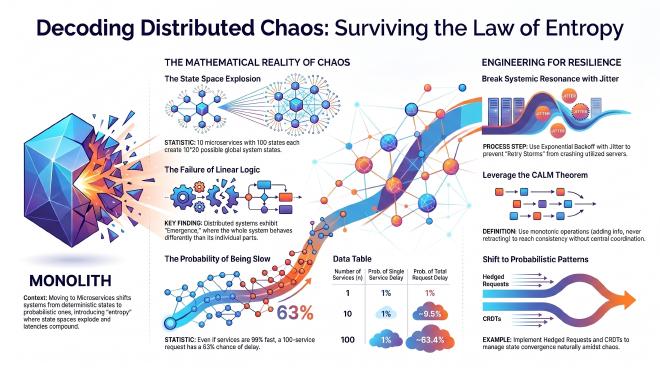

Why does traditional model checking fall apart at distributed scale? One word: explosion. The State Space doesn’t grow — it detonates.

In a Monolith, shared variables in memory are tightly coupled at compile time. Once the system is decomposed into $n$ microservices, the size of the global state space $\Omega$ is no longer a simple sum; it grows exponentially through the Cartesian product:

$$ |\Omega| = |C| \times \prod_{i=1}^{n} |S_i| $$

Here, $|S_i|$ is the number of internal states of service $i$, and $|C|$ is the number of states of the network communication channel, a probabilistic entity rife with potential issues like packet drops, delays, and duplicates. A system with just 10 services, each having 100 states, generates a baseline of $100^{10} = 10^{20}$ possible states, and that is before factoring in the channel’s own states. This is truly where simplicity goes to die.
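To make the arithmetic concrete, here is a minimal sketch of the product formula. The function name `global_state_count` and the default `channel_states=1` (which ignores the network channel) are illustrative assumptions, not part of any library.

```python
from math import prod

def global_state_count(service_state_counts, channel_states=1):
    """Size of the joint state space: |C| times the product of every |S_i|."""
    return channel_states * prod(service_state_counts)

# 10 services with 100 internal states each, ignoring the channel (|C| = 1).
baseline = global_state_count([100] * 10)
print(baseline == 10**20)  # True

# Adding one more 100-state service multiplies the space by 100, not adds 100.
print(global_state_count([100] * 11) // baseline)  # 100
```

The second line of output is the point: each new service multiplies the space rather than adding to it.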

III. The Law of Non-linear Interaction: $f(\sum x_i) \neq \sum f(x_i)$

Complexity theory makes a sharp distinction between mechanically complicated and structurally complex systems. A watch is complicated, but its behavior is linear and predictable. A microservices network is complex because it inherently exhibits mathematical Emergence.

The core inequality governing this chaos is the failure of the superposition principle:

$$ f(x_1 + x_2 + \dots + x_n) \neq f(x_1) + f(x_2) + \dots + f(x_n) $$

In a distributed environment, the whole is strictly greater than the sum of its parts ($V_{system} > \sum V_{components}$). Worse: Gunther’s Universal Scalability Law warns of retrograde scalability. Past a certain point, coordination overhead so dominates that adding resources slows the system down.
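Retrograde scalability can be seen numerically. The sketch below uses the usual form of the Universal Scalability Law, $C(N) = N / (1 + \alpha(N-1) + \beta N(N-1))$, where $\alpha$ models contention and $\beta$ models coherency (crosstalk) cost. The coefficient values are assumptions chosen only for illustration; real values have to be fitted from measurements.

```python
from math import sqrt

def usl_throughput(n, alpha, beta):
    """Relative throughput at n nodes under the Universal Scalability Law."""
    return n / (1 + alpha * (n - 1) + beta * n * (n - 1))

def usl_peak(alpha, beta):
    """Node count at which throughput peaks: sqrt((1 - alpha) / beta)."""
    return sqrt((1 - alpha) / beta)

# Assumed coefficients, for illustration only.
ALPHA, BETA = 0.05, 0.001

for n in (1, 8, 16, 31, 64, 128):
    print(f"n={n:>3}  relative throughput={usl_throughput(n, ALPHA, BETA):.2f}")

print(f"throughput peaks near n={usl_peak(ALPHA, BETA):.1f}")
```

With these assumed coefficients, throughput peaks at roughly 31 nodes and then declines: 128 nodes deliver less than 31 do.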

IV. The Battle at the Probabilistic Edge: Tail Latency

System architects are often misled by median (p50) latency. But at scale, the actual user experience is governed by Tail Latency at the 99th percentile (p99). As Jeff Dean and Luiz André Barroso famously explored in “The Tail at Scale”, even rare delays become near-certainties in distributed fan-outs.

Consider the math: if a single request must concurrently call $n=100$ microservices, and each service independently has only a 1% probability of being slow, the probability that the entire request will be slow is:

$$ P(\text{slow request}) = 1 - P(\text{fast})^n = 1 - (0.99)^{100} \approx 63.4\% $$

At this scale, delays aren’t exceptions; they’re the baseline. The counter is Hedged Requests: fire a backup call to a replica the moment the primary misses its time budget. You can’t make slow requests faster, but you can make sure you’re never waiting on just one.
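A minimal sketch of both ideas, using only Python's standard `asyncio`. The names `p_any_slow`, `hedged`, and `fake_replica`, along with the latency values, are hypothetical and exist only for this illustration. A production version would also need to bound the extra load that hedging creates.

```python
import asyncio

def p_any_slow(n, p_slow):
    """Probability that at least one of n independent calls is slow."""
    return 1 - (1 - p_slow) ** n

async def hedged(make_call, hedge_after):
    """Start one call; if it has not finished within hedge_after seconds,
    start a backup and return whichever finishes first."""
    primary = asyncio.create_task(make_call())
    done, _ = await asyncio.wait({primary}, timeout=hedge_after)
    if done:
        return primary.result()
    backup = asyncio.create_task(make_call())
    done, pending = await asyncio.wait(
        {primary, backup}, return_when=asyncio.FIRST_COMPLETED
    )
    for task in pending:
        task.cancel()  # stop waiting on the loser
    return done.pop().result()  # re-raises if the winner failed

async def demo():
    # Hypothetical replica: the first call is slow, the backup is fast.
    latencies = iter([0.5, 0.01])

    async def fake_replica():
        delay = next(latencies)
        await asyncio.sleep(delay)
        return delay

    return await hedged(fake_replica, hedge_after=0.05)

print(f"P(slow) for n=100, p=1%: {p_any_slow(100, 0.01):.1%}")
print("winning call latency:", asyncio.run(demo()))
```

In the demo the primary would take 0.5 s, so the backup fires after 0.05 s and wins with its 0.01 s reply.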

V. Tipping Points and Structural Collapse: Metastable Failures

Chaos peaks at a particularly nasty failure mode: the system stays broken after the original trigger is gone. That’s a Metastable Failure — a stuck state that won’t self-heal.

The primary culprit is the dreaded Retry Storm. When latency exceeds client timeouts, clients automatically retry. The extra load artificially pushes server utilization ($\rho$) toward 100%. According to Kingman’s Formula, the expected wait time $E[W]$ is proportional not to utilization itself but to the sharply non-linear term $\rho / (1 - \rho)$:

$$E[W] \propto \frac{\rho}{1 - \rho}$$

As $\rho \to 1$, wait time grows without bound. Following Little’s Law ($L = \lambda W$), as wait time $W$ explodes, the queue length $L$ grows until it exhausts all available threads and memory, triggering a cascading collapse.
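A short numeric sketch shows how steep the curve becomes. It models only the utilization term of Kingman's approximation (the variability and service-time factors are left out), and the function names and the arrival rate of 1000 requests per second are assumptions for illustration.

```python
def wait_factor(rho):
    """The rho / (1 - rho) utilization term from Kingman's approximation."""
    if not 0 <= rho < 1:
        raise ValueError("utilization must be in [0, 1)")
    return rho / (1 - rho)

def queue_length(arrival_rate, wait_time):
    """Little's Law: L = lambda * W."""
    return arrival_rate * wait_time

for rho in (0.5, 0.8, 0.9, 0.99):
    print(f"rho={rho:.2f}  wait factor={wait_factor(rho):.1f}")

# Assumed load of 1000 requests/s: a 10x longer wait means 10x more queued work.
print(queue_length(1000, 0.01), queue_length(1000, 0.1))
```

Moving from 90% to 99% utilization multiplies the wait factor by about eleven, which is why a modest burst of retries can tip a busy service over.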

To survive, we must combine Exponential Backoff with Jitter and robust Circuit Breakers. Fixed-interval retries synchronize clients into a catastrophic thundering herd; Jitter introduces controlled randomness that spreads retries out and breaks the systemic resonance.
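The two mechanisms can be sketched together as follows. This is a simplified illustration: the "full jitter" variant (a uniform delay below a capped exponential bound) is one common choice among several, and every name and default here (`backoff_delays`, `CircuitBreaker`, `call_with_retries`, the thresholds) is hypothetical rather than taken from a specific library.

```python
import random
import time

def backoff_delays(base=0.1, cap=5.0, attempts=5, rng=random.random):
    """Yield 'full jitter' delays: uniform in [0, min(cap, base * 2**attempt))."""
    for attempt in range(attempts):
        yield rng() * min(cap, base * 2 ** attempt)

class CircuitBreaker:
    """Opens after max_failures consecutive failures; permits a trial call
    once reset_after seconds have passed."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow(self):
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.reset_after

    def record(self, ok):
        if ok:
            self.failures, self.opened_at = 0, None
        else:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()

def call_with_retries(fn, breaker, sleep=time.sleep, **backoff):
    """Retry fn with jittered backoff, failing fast while the circuit is open."""
    for delay in backoff_delays(**backoff):
        if not breaker.allow():
            raise RuntimeError("circuit open: failing fast")
        try:
            result = fn()
        except Exception:
            breaker.record(ok=False)
            sleep(delay)  # a random delay de-synchronizes competing clients
        else:
            breaker.record(ok=True)
            return result
    raise RuntimeError("retries exhausted")
```

The breaker is what stops the retry loop from feeding the storm: once the circuit opens, callers fail immediately instead of adding load to a server that is already saturated.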

VI. Epilogue: Orchestrating the Chaos

If Strong Consistency and synchronous coordination guarantee collapse at load, what survives? A different philosophy at the data layer.

Instead of imposing deterministic control top-down, the pragmatic move is to lean on the CALM Theorem — Consistency As Logical Monotonicity. It proves something deceptively simple: operations that only add information, never retract it, can run safely without any central coordinator.

This paradigm leads us to Conflict-Free Replicated Data Types, commonly known as CRDTs. Because their merge operations are commutative, associative, and idempotent, replicas converge to the same state regardless of message order or duplication. For complex business transactions, we embrace eventual consistency through structural patterns like Sagas or the Outbox Pattern.
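As an illustration, here is a minimal sketch of one of the simplest CRDTs, a grow-only counter (G-Counter). Each replica increments only its own slot, and merging takes the per-slot maximum. The class and replica names are assumptions for this example.

```python
class GCounter:
    """Grow-only counter: one non-decreasing slot per replica."""

    def __init__(self, counts=None):
        self.counts = dict(counts or {})

    def increment(self, replica_id, amount=1):
        if amount < 0:
            raise ValueError("a grow-only counter cannot decrease")
        self.counts[replica_id] = self.counts.get(replica_id, 0) + amount

    def merge(self, other):
        """Per-slot maximum: commutative, associative, and idempotent."""
        keys = self.counts.keys() | other.counts.keys()
        return GCounter(
            {k: max(self.counts.get(k, 0), other.counts.get(k, 0)) for k in keys}
        )

    def value(self):
        return sum(self.counts.values())

# Two replicas accept writes independently, with no coordinator.
a, b = GCounter(), GCounter()
a.increment("replica-a", 2)
b.increment("replica-b", 3)

merged = a.merge(b)
print(merged.value())                            # 5
print(merged.counts == b.merge(a).counts)        # True: order does not matter
print(merged.merge(b).counts == merged.counts)   # True: duplicates are harmless
```

The counter only ever adds information, which is exactly the monotonic shape that the CALM Theorem says needs no coordination.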

We do not fight Entropy. We learn to coordinate and orchestrate it.

VII. References & Further Reading

- Dean, J., & Barroso, L. A. (2013). The Tail at Scale.

- Ameller, M., et al. (2024). Micro Services: Methodologies, Challenges, and Trends.

- Hellerstein, J. M., & Alvaro, P. (2020). Keeping CALM: When is Distributed Consistency Easy?

- DoorDash Engineering (2022). Failure Mitigation for Microservices: An Intro to Aperture.

- Bhatti, S. (2023). Testing Distributed Systems Failures with Interactive Simulators.

- Golshani, H. (2021). Understanding CAP Theorem in Microservices.

- Montesi, F., et al. Modeling Cascading Failure Propagation through Dynamic Bayesian Networks.