Paper 03: Normal Accidents Theory & The Fallacy of Root Cause Analysis

I. Introduction: The Newtonian Ghost in the Machine

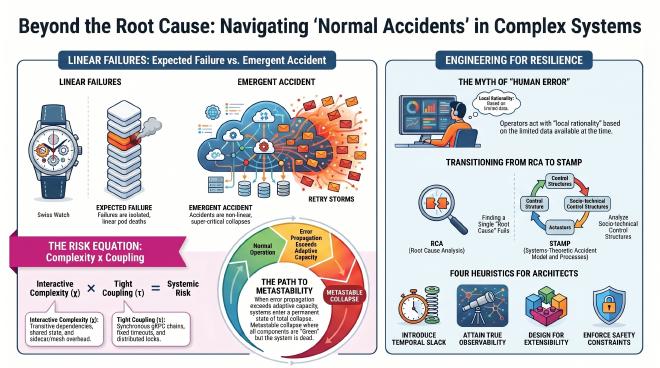

Engineers grew up on the Swiss-watch model. Something stops? A gear broke, or someone forgot to wind it. Isolate the part, understand the whole. Clean, satisfying, and — for simple or merely complicated systems — true.

It falls apart the moment you step into a Complex Adaptive System like a modern cloud-native architecture. John Allspaw and the STELLA Report drew the line: the invisible system below the line (code, hardware, networks) and the representations above the line (telemetry, dashboards) that operators actually touch never quite match. So when the outage hits, instinct sends us hunting for a Root Cause. This paper argues the hunt is a wild goose chase — the Root Cause is a phantom, and the accident itself is Normal.

II. Axiomatic Problem Statement: Failure vs. Accident

Systemic collapse only makes sense once you separate two events that look alike from the outside but behave nothing like each other:

- Expected Failure: These are linear, bounded, and sub-critical component degradations. A pod runs out of memory and dies. The system’s adaptive capacity acts as a shock absorber; the orchestrator detects the failure, routes traffic elsewhere, and restarts the pod. The failure is isolated and deterministic.

- Emergent Systemic Accident: These are non-linear, unbounded, and super-critical metastable collapses. Every component may be technically healthy and executing its logic perfectly, for example by issuing automated retries. Across thousands of nodes, however, these localized survival mechanisms form a positive, destabilizing feedback loop: a Retry Storm. The accident emerges from the destructive resonance of safe behaviors interacting across tight couplings.

III. Theoretical Framework: Perrow’s Matrix & The Phenomenological Model

Two variables drive the structural risk of any system: Interactive Complexity $\chi$ and Tight Coupling $\tau$.

1. The Structural Metaphor

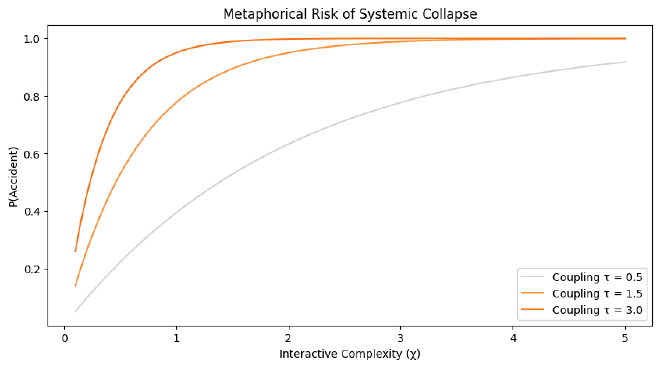

We can formalize this relationship using a macro-model of systemic risk. Complex Adaptive Systems rarely follow smooth curves, because self-organized criticality produces abrupt jumps, but the expression below still serves as a useful phenomenological heuristic for architectural trade-offs:

$$P_{acc} = 1 - e^{-\int (\chi \cdot \tau)\, dt}$$

Nomenclature:

- Interactive Complexity $\chi$: The degree of non-obvious, non-linear feedback loops.

- Tight Coupling $\tau$: The inverse of temporal and functional slack.

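- Note: This formula is a structural metaphor for hazard rates, not a calibrated model. The product $\chi \cdot \tau$ plays the role of a hazard rate, so as exposure time $t$ accumulates (and as rising load pushes $\chi$ and $\tau$ up), the probability of encountering an emergent interaction approaches 1.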
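As a minimal numeric sketch of the heuristic above, assume $\chi$ and $\tau$ are constant over the exposure window, so the integral reduces to $\chi \cdot \tau \cdot t$. The function names and the unitless input values below are hypothetical and chosen only for illustration; they are not calibrated against any real system.

```python
import math


def accident_probability(chi: float, tau: float, t: float) -> float:
    """P_acc = 1 - exp(-chi * tau * t), assuming constant chi and tau."""
    return 1.0 - math.exp(-chi * tau * t)


def time_to_probability(chi: float, tau: float, p: float) -> float:
    """Exposure time at which P_acc reaches p (inverse of the function above)."""
    return -math.log(1.0 - p) / (chi * tau)


# Hypothetical, unitless values: halving tau doubles the time to reach 50%.
for tau in (1.0, 0.5, 0.25):
    t_half = time_to_probability(chi=0.2, tau=tau, p=0.5)
    print(f"tau={tau:<4} time to P_acc=0.5: {t_half:.2f}")
```

Under this constant-rate assumption, loosening the coupling does not remove the hazard. It only stretches the time axis, which is consistent with the note that the probability still approaches 1.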

2. Architectural Isomorphism

| Theoretical Variable | Software Architecture Metric |
|---|---|
| Interactive Complexity $\chi$ | Transitive dependencies + Shared state + Sidecar/Mesh overhead. |
| Tight Coupling $\tau$ | Synchronous gRPC chains + Fixed timeouts < P99 latency + Distributed locks. |
| Component Failure | Deterministic bug. Low impact, isolated by design. |
| System Accident | Metastable collapse where every component is Green but the system is dead. |
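To make the tight-coupling row concrete, the following sketch estimates two effects of a synchronous call chain. It assumes, purely for illustration, that hops time out independently with the same probability and that every hop retries a failed call; the function names are hypothetical.

```python
def chain_timeout_probability(p_hop: float, depth: int) -> float:
    """Chance that at least one of `depth` synchronous hops times out,
    assuming each hop times out independently with probability p_hop."""
    return 1.0 - (1.0 - p_hop) ** depth


def worst_case_amplification(attempts_per_hop: int, depth: int) -> int:
    """Upper bound on calls reaching the deepest service per user request
    when every hop makes up to `attempts_per_hop` attempts."""
    return attempts_per_hop ** depth


# A timeout set exactly at P99 latency fails about 1% of calls per hop;
# a timeout below P99 fails more.
print(chain_timeout_probability(p_hop=0.01, depth=10))
# Three attempts per hop across a four-hop chain.
print(worst_case_amplification(attempts_per_hop=3, depth=4))  # 81
```

Both numbers grow with chain depth, which is why a per-hop setting that looks harmless in isolation can dominate behavior at the system level.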

IV. Topological Dynamics: The Path to Metastability

Once the error propagation rate outruns adaptive capacity $A$, the system tips into Metastability. The trigger is almost always the same: critical shared resources — thread pools, network buffers, database connection queues — saturate. With no slack left to absorb variance, the local fix turns into a global pathogen. An automated retry, perfectly safe on one node, becomes the storm on a thousand.
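The dynamic described above can be sketched with a toy discrete-time model. Everything here is an assumption made for illustration: the function name `simulate_goodput`, the parameter values, and in particular the rule that the success rate falls as $(C/\text{offered})^2$ once offered load exceeds capacity $C$, which stands in for work wasted on requests that time out. It is not a model of any real system.

```python
def simulate_goodput(max_retries: int, ticks: int = 80, arrivals: float = 80.0,
                     capacity: float = 100.0, spike_start: int = 10,
                     spike_len: int = 5, spike_extra: float = 200.0) -> list:
    """Return goodput per tick for a service hit by a short load spike."""
    # cohorts[k] holds the requests currently on attempt number k.
    cohorts = [0.0] * (max_retries + 1)
    goodput = []
    for t in range(ticks):
        in_spike = spike_start <= t < spike_start + spike_len
        cohorts[0] = arrivals + (spike_extra if in_spike else 0.0)
        offered = sum(cohorts)
        if offered <= capacity:
            success = 1.0
        else:
            # Assumed saturation penalty: overload wastes capacity on
            # requests that will time out anyway.
            success = (capacity / offered) ** 2
        goodput.append(offered * success)
        # Failed requests move to the next attempt; the oldest cohort gives up.
        failed = [c * (1.0 - success) for c in cohorts]
        cohorts = [0.0] + failed[:-1]
    return goodput


for retries in (0, 3):
    final = simulate_goodput(max_retries=retries)[-1]
    print(f"max_retries={retries}: goodput long after the spike = {final:.1f}")
```

With these assumed numbers, the run without retries returns to serving every arrival once the spike ends, while the run with three retries stays stuck at a much lower goodput even though arrivals are back below capacity. The trigger is gone; the retries alone sustain the overload.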

V. The “Human Error” Myth & Local Rationality

The term “Human Error” is a Stop Rule: a convenient place to end an investigation to avoid confronting uncomfortable architectural truths.

- The Local Rationality Principle: As Sidney Dekker and Richard Cook argue, people do not come to work to do a bad job. Their actions are “locally rational” given their goals, their knowledge, and the limited representations available to them “above the line” at the time.

- Graceful Extensibility: A concept developed by Dr. David Woods, this is the ability of a system to extend its capacity to adapt when surprise events challenge its boundaries. In complex systems, human operators are not the cause of failure; they are the ultimate source of Resilience, providing the adaptive capacity that automation lacks.

VI. Beyond RCA: Transitioning to STAMP & CAST

Traditional Root Cause Analysis leans on a chain-of-events model — find the first domino, fix it, done. In non-linear systems, that story doesn’t hold; the dominos don’t line up. Dr. Nancy Leveson’s STAMP (System-Theoretic Accident Model and Processes) replaces the chain with a control structure.

Instead of asking “What component failed?” or “Who is to blame?”, we use CAST (Causal Analysis based on STAMP) to analyze the Socio-Technical Control Structure. This structure includes not just automated controllers such as Kubernetes and circuit breakers, but also Blunt End elements like organizational CI/CD gating, on-call policies, and alerting thresholds.

We ask:

- What were the safety constraints that were violated?

- Why was the Socio-Technical Control Structure inadequate to enforce these constraints over component interactions?

- How did the feedback loops (or representational mismatches) contribute to the loss of control?

VII. Quantitative Heuristic: Simulating The Tipping Point

The simulation below isn’t predictive — it’s pedagogical. But it shows the shape of the problem: tighten a complex system, and the hazard curve doesn’t crawl. It snaps.
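One minimal way to reproduce that shape is a toy cascade on a random dependency graph. The model is an assumption for illustration only: `fanout` random dependents per service stands in for interactive complexity $\chi$, and the probability `tau` that a failure spreads along a dependency edge stands in for tight coupling $\tau$. The function name, graph size, and parameter values are hypothetical.

```python
import random


def mean_cascade_fraction(n_nodes: int, fanout: int, tau: float,
                          trials: int, seed: int = 0) -> float:
    """Average share of services that fail after one random initial failure."""
    rng = random.Random(seed)
    # Hypothetical topology: every service has `fanout` random dependents.
    dependents = [rng.sample(range(n_nodes), fanout) for _ in range(n_nodes)]
    total = 0.0
    for _ in range(trials):
        start = rng.randrange(n_nodes)
        failed = {start}
        frontier = [start]
        while frontier:
            node = frontier.pop()
            for dep in dependents[node]:
                # A failure crosses each dependency edge with probability tau.
                if dep not in failed and rng.random() < tau:
                    failed.add(dep)
                    frontier.append(dep)
        total += len(failed) / n_nodes
    return total / trials


# Sweep the coupling while holding the topology fixed.
for tau in (0.05, 0.15, 0.25, 0.35, 0.5, 0.8):
    share = mean_cascade_fraction(n_nodes=500, fanout=4, tau=tau, trials=200)
    print(f"tau={tau:.2f}  mean failed share={share:.3f}")
```

Read as a branching process, this toy model keeps cascades small while `fanout * tau` is below roughly 1 and lets them engulf a large share of the graph once it rises above that threshold, which is where the printed curve jumps.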


VIII. Strategic Heuristics for the Architect

- Introduce Temporal Slack: Convert synchronous dependencies into asynchronous event streams to reduce $\tau$ and make buffer saturation less likely.

- Attain Observability, Not Just Telemetry: Bridge the gap between above the line representations and below the line reality.

- Design for Graceful Extensibility: Ensure that when the system reaches its boundary, it allows for human intervention rather than collapsing into a metastable state.

- Enforce Safety Constraints: Shift focus from component reliability to the integrity of the hierarchical control structure (STAMP), including both code and organizational policy.
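One way to turn the retry behavior from Section II into an enforced safety constraint is a retry budget, which caps retries at a fixed share of recent traffic. The class below is a hypothetical sketch with assumed names and parameters, not an API from any specific library; it uses integer credit units to avoid floating-point drift.

```python
class RetryBudget:
    """Permit retries only up to a fixed share of observed requests."""

    COST = 100  # credit units consumed by one retry

    def __init__(self, retries_per_100: int, max_credit: int = 1000):
        self.earn = retries_per_100    # credit earned per first-attempt request
        self.max_credit = max_credit   # cap so quiet periods cannot bank unlimited retries
        self.credit = 0

    def record_request(self) -> None:
        """Call once for every first-attempt request."""
        self.credit = min(self.credit + self.earn, self.max_credit)

    def try_retry(self) -> bool:
        """Return True and spend credit if a retry is currently allowed."""
        if self.credit >= self.COST:
            self.credit -= self.COST
            return True
        return False


# Worst case: every one of 100 requests fails and wants a retry.
budget = RetryBudget(retries_per_100=10)
allowed = 0
for _ in range(100):
    budget.record_request()
    if budget.try_retry():
        allowed += 1
print(allowed)  # 10
```

The design choice is that the constraint lives in the control structure rather than in each caller's judgment: however many requests fail, retry traffic stays bounded at the configured share.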

IX. Conclusion

Normal Accident Theory and STAMP teach us humility. We do not control Complex Adaptive Systems; we merely manage the socio-technical constraints that govern them. By accepting that accidents are an inherent property of our topology, we stop chasing the phantom of “Root Cause” and begin the real work of engineering for resilience.

X. References & Further Reading

- Perrow, C. (1984). Normal Accidents: Living with High-Risk Technologies.

- Cook, R. I. (1998). How Complex Systems Fail.

- Leveson, N. (2012). Engineering a Safer World: Systems Thinking Applied to Safety.

- Dekker, S. (2006). The Field Guide to Understanding ‘Human Error’.

- Woods, D. D. (2018). The Theory of Graceful Extensibility: Basic Rules that Govern Adaptive Systems.

- SNAFUcatchers Consortium (2017). STELLA: Report from the SNAFUcatchers Workshop on Coping with Complexity.