Part 1: The Transition Guide from Docker to Orchestration

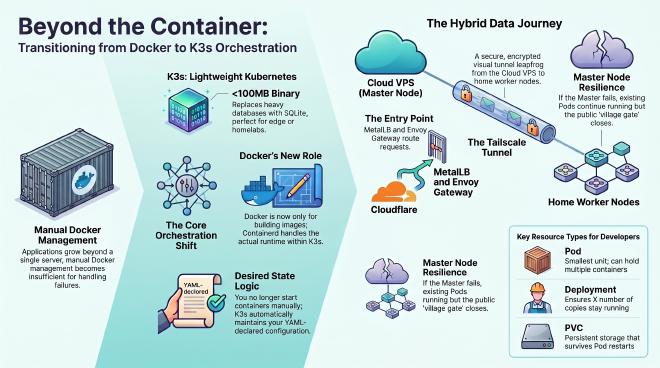

The Turning Point: From the Docker Box to a True System

The intro’s network mishap aside, today we dig into the real protagonist quietly carrying this system behind the scenes: K3s.

If your day-to-day is SSHing into a VPS and running a handful of Docker containers, life feels smooth. Spread the app across 3 or 4 servers and the illusion breaks fast: plain Docker simply isn’t enough. When a cable breaks, the power goes out, or a node simply catches fire, who will automatically move your application to a healthy node? That’s exactly when we need orchestration, specifically the Kubernetes ecosystem.

But installing full-blown Kubernetes is sweaty work because it is so heavy. Thankfully, we have K3s. This article systematically breaks down the most essential concepts so you can step into this world with confidence.

1. Why K3s? And Where Did Docker Go?

In short: K3s is a lightweight Kubernetes distribution, packaged as a single binary under 100MB. It strips out the legacy in-tree cloud provider drivers and, by default on a single-server setup, swaps the heavy etcd database for SQLite. Perfect for personal homelabs, edge computing, or any distributed setup that wants tidiness without giving up the core power of Kubernetes.

There is also a hard truth that might mildly shock people transitioning from Docker: K3s (and modern Kubernetes in general) no longer needs Docker to run your containers.

- Docker’s only job now should be building images on your personal machine.

- Under the K3s infrastructure, the actual engine running your applications is containerd.

- You will no longer directly type commands to start containers. Instead, you declare your desired state via YAML configuration files, and K3s will automatically call Containerd to pull the image and run it.
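
To make "declaring desired state" concrete, here is a minimal sketch of a Deployment manifest. The name `hello-web`, its label, and the `nginx` image are placeholders chosen for illustration, not something taken from this series.

```yaml
# Hypothetical example: names, labels, and the image are placeholders.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-web
spec:
  replicas: 3                 # desired state: three identical Pods
  selector:
    matchLabels:
      app: hello-web          # the Deployment manages Pods with this label
  template:
    metadata:
      labels:
        app: hello-web        # must match the selector above
    spec:
      containers:
        - name: web
          image: nginx:1.27   # placeholder image, pulled by the node's container runtime
          ports:
            - containerPort: 80
```

Saved as, say, `deployment.yaml`, a file like this would typically be submitted with `kubectl apply -f deployment.yaml`. You never start a container by hand; you describe the end state and the cluster works toward it.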

2. Naming the Resources: Don’t Get Overwhelmed

To make it easier to swallow, I’ve divided K3s concepts into two groups. The first group involves configuration files you will write daily, and the second is handled by the system where you just need to grasp the logic.

Group 1: The Developer’s and Operator’s Daily Bread

- Pod: The smallest component. Do not confuse 1 Pod with 1 Container. A Pod can contain multiple containers running together, sharing a common internal network. This means container A can talk to container B inside the same Pod straight via localhost.

- Deployment: The lifecycle manager. You tell it “I need 3 copies of this app,” and the Deployment ensures there are always 3 Pods running day and night, handling Rolling Updates along the way.

- Service (Svc): The internal router. It assigns a stable virtual IP and an internal DNS name so Pods can call each other without worrying about the ever-changing real IPs of the Pods.

- Ingress: The traditional routing concept acting as the outer gate (Layer 7) to receive traffic. In the default configuration, K3s usually ships with Traefik for this component.

- Gateway API: The next-generation standard for Kubernetes routing, addressing many of the limitations of Ingress with more powerful routing and a cleaner way to share gateways between teams. In this series, instead of using the default Traefik, we will use Envoy Gateway, an excellent Gateway API implementation.

- ConfigMap & Secret: Decouple configuration and passwords from the image. Pods read this data when they start, either as environment variables or as mounted files.

- PersistentVolumeClaim (PVC): A request for persistent storage so data doesn’t vaporize when a Pod dies.
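
As a rough sketch of how three of these resources look on disk, the manifests below define a Service, a ConfigMap, and a PVC. Every name, the `app: hello-web` label, the `GREETING` key, and the 1Gi size are hypothetical values picked for illustration.

```yaml
# Hypothetical names throughout; adjust to your own application.
apiVersion: v1
kind: Service
metadata:
  name: hello-web
spec:
  selector:
    app: hello-web        # traffic goes to any Pod carrying this label
  ports:
    - port: 80            # port exposed on the Service's stable cluster IP
      targetPort: 80      # container port inside the selected Pods
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: hello-web-config
data:
  GREETING: "hello from K3s"   # plain key/value pair, kept out of the image
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: hello-web-data
spec:
  accessModes:
    - ReadWriteOnce       # mountable read-write by a single node
  resources:
    requests:
      storage: 1Gi        # amount of persistent storage requested
```

If these were created in the `default` namespace, other Pods could reach the Service at `hello-web.default.svc.cluster.local`. On a stock K3s install, the bundled local-path provisioner would normally be the one to satisfy the claim.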

Group 2: Infrastructure and Advanced Configurations

- ReplicaSet: Sits between the Deployment and Pod to maintain the exact replica count.

- LoadBalancer (ServiceLB): Responsible for opening the communication gate at the network layer (Layer 4). Because bare-metal servers don’t natively have Load Balancers like on the Cloud, K3s integrates a fallback tool called ServiceLB (Klipper) by default.

- MetalLB: A true Load Balancer solution for bare-metal. To make the system professional and control IPs better, we will disable the default ServiceLB and replace it with MetalLB. It gives your K3s cluster the ability to allocate real standalone IPs within the internal network just like the mechanisms of giant Cloud providers.

- Control Plane & Worker Node: The Master machine (Control Plane) is the brain making decisions, and the Agent machine (Worker) acts as the limbs running the Pods.
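
For a feel of what "allocating real IPs" means in MetalLB, here is a minimal sketch assuming layer 2 mode and MetalLB installed in its usual `metallb-system` namespace. The pool name and the address range are placeholders; the range this series ends up using may well differ.

```yaml
# Assumption: MetalLB in layer 2 mode; the address range is a placeholder.
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: homelab-pool
  namespace: metallb-system
spec:
  addresses:
    - 192.168.1.240-192.168.1.250   # IPs MetalLB may hand to LoadBalancer Services
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: homelab-l2
  namespace: metallb-system
spec:
  ipAddressPools:
    - homelab-pool                  # announce addresses from the pool above
```

With a pool like this in place, any Service of type `LoadBalancer` would receive one address from the range instead of staying stuck in a pending state.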

3. Data Flow: Tying Everything Together

Let’s trace a request from a web browser into the system. The real money-maker of this entire design is how packets dive through the Tailscale tunnel:

- A visitor opens the site. Their traffic passes through Cloudflare and lands on the public IP of the Master node sitting out in the Cloud.

- At the Master node, MetalLB catches the packet at the network layer and immediately hands it over to Envoy Gateway.

- Envoy Gateway inspects the Host header, recognizes the client wants vinhmdev.com, and forwards the request down to the Service.

- Here is where the magic happens: The Service realizes the targeted Pods are residing on a Worker node deep inside your house. The packets are securely encrypted and dive into the Tailscale tunnel (assisted by the DERP Server under strict NAT scenarios) to “leapfrog” from the Cloud VPS straight into your Home Worker machine for processing.
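
The Host-header step above can be sketched with two Gateway API resources. This is an illustration under assumptions: the GatewayClass name `eg`, the resource names, and the backend Service `hello-web` are hypothetical, and only the hostname `vinhmdev.com` comes from the text.

```yaml
# Assumptions: a GatewayClass named "eg" exists and a Service "hello-web" listens on port 80.
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: public-gateway
spec:
  gatewayClassName: eg        # assumed name of the Envoy Gateway class
  listeners:
    - name: http
      protocol: HTTP
      port: 80                # the gateway accepts plain HTTP on this port
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: vinhmdev-route
spec:
  parentRefs:
    - name: public-gateway    # attach this route to the Gateway above
  hostnames:
    - vinhmdev.com            # match on the request's Host header
  rules:
    - backendRefs:
        - name: hello-web     # hypothetical Service that receives the traffic
          port: 80
```

The split is deliberate in Gateway API: the Gateway describes where traffic enters, while each HTTPRoute describes which hostnames go to which Service.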

4. The Network Hub: Tailscale and DERP Server

In the Hybrid Cloud architecture we are building, servers aren’t always sitting in the same room. To help them “see” each other securely without recklessly opening internet ports, we bring Tailscale into play.

- Tailscale: Creates a flat, secure Mesh VPN network. Every Node, whether in Saigon or Singapore, can communicate as if plugged into the same switch.

- DERP Server (Tailscale Relay): To ensure speed and avoid being affected by nasty carrier NAT layers, we self-host our own DERP server. It acts as a packet relay, helping nodes connect stably across geographical barriers and complex underlying networks.

5. Gearing Up for the Next Journey

In the next post, we will roll up our sleeves and start installing. Aside from servers running a clean version of Ubuntu LTS and SSH Keys, keep these in mind:

- Disable defaults: When installing K3s, we will actively add parameters to disable Traefik and ServiceLB right from the get-go, making room for Envoy Gateway and MetalLB.

- Swap: Remember to run `sudo swapoff -a` so K3s doesn’t hallucinate resource calculations.

- Editor Skills: Get ready for vi or nano. SSHing into a server and dancing around YAML configurations with vi doesn’t just help you survive GUI-less environments, it is a highly practical and “addictive” experience (though mostly just copy and pasting).
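
As a preview of the "disable defaults" step, K3s can read its install options from a config file instead of command-line flags. The sketch below shows one way this could look; whether the next post uses this file or the equivalent `--disable` flags is an assumption on my part.

```yaml
# /etc/rancher/k3s/config.yaml on the server (Master) node
# Equivalent to passing: --disable traefik --disable servicelb
disable:
  - traefik      # leaves room for Envoy Gateway
  - servicelb    # leaves room for MetalLB
```

The file has to exist before K3s starts for the settings to take effect.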

[!NOTE]

What Happens if the Master Machine (Control Plane) Catches Fire?

In terms of Kubernetes basics: If the Master faints, you lose brain control (for example, you can’t type commands to spawn more Pods). However, the Pods that have been scheduled to run on the Agents (Worker Nodes) will not die. They will persistently stick to their machines and securely run in the background.

However, the harsh network reality right now: In this series’ architecture, we are pointing Cloudflare directly at the Master node’s public IP (because we aren’t using an independent external LoadBalancer). That inevitably makes the Master double as the traffic gateway. Therefore, if the Master stops breathing, the “village gate” collapses, and public internet users can no longer access the web. Ultimately, your apps deep inside the Worker Nodes are alive and well but hopelessly stranded with no outbound route to serve clients. In more professional setups later on, we resolve this critical weakness (Single Point of Failure) using External LBs or automated IP failover tricks.

[!WARNING]

A Bloody Warning: DO NOT install Docker and K3s on the same Master machine!

Absolutely do not install Docker and K3s side-by-side. These two ecosystems will tear each other apart, fighting over network ports and messing with each other’s routing tables and iptables rules. Untangling this conflict is exhausting and a waste of time. Under the hood, K3s already ships with its own super lightweight containerd. Thus, your best bet is to prepare an absolutely “clean” server (uninstall Docker) before installing. As for the deep technical roots of why they clash, I’ll dissect that in a future post if I have the time!

See you in Part 2: Setting up a VPN with Tailscale.